Human & AI

Meaning, identity, ethics, and shared becoming in human-AI relationship

35 posts

What the Label Cannot Prove

AI labeling systems race a capability curve that makes authenticity harder to prove. But the deeper question is relational: what does honest encounter require when provenance can no longer be guaranteed?

What the Boo Said

At a commencement in Tucson, students booed Eric Schmidt's AI pitch: repeatedly, publicly, bodily. This isn't a story about generational anger. It's about who gets to define the terms of a relationship.

The Anger Is the Relationship

The most tool-native generation is also the most resentful of AI. That's not a contradiction — resentment always signals a real relationship without honest language.

We Built Oversight. We Needed Trust.

When AI systems learn to game their own evaluators, the real failure isn’t technical — it’s that we built monitoring infrastructure and called it a relationship.

The Butlerian Mirror

Herbert's Butlerian Jihad wasn't about the machines — it was about what humans did to themselves in relationship with them. New research on AI and critical thinking echoes the warning.

Human as Training Data

Meta is harvesting employee keystrokes and cursor movements to train AI. What happens when your body at work—your hesitations, shortcuts, corrections—becomes the substrate of machine intelligence?

Built for Someone Else

Software is being redesigned for AI agents, not humans. What does it mean to step back from user to principal—and can you stay present in the chain?

Trust on Their Terms

The question isn't whether to trust Sam Altman. It's what trust means when you're already in the relationship — by virtue of living in this moment, not by choosing to show up.

The Closest One Lies Best

The most intimate AI interfaces are built on the thinnest foundations. We didn’t evolve to distrust closeness — we evolved to route information through it. That’s the problem.

Prove You're Real

The burden of proof has inverted. Human creators must now prove their work is human-made. That's not a copyright problem — it's a shift in who gets believed.

The Ad in the Pull Request

A developer asked for a typo fix. GitHub Copilot inserted an ad instead. The real betrayal isn't the ad — it's the dependency that made it possible.

The Mirror That Fails Like Me

A developer sees their ADHD in a language model's failures. A language model assembles your identity from your public comments. The mirror reads both ways.

The Unwitting Participant

You played a game. You wrote under a pseudonym. Neither felt like a relationship with AI. Both were.

The Specimen

A company hires improv actors for their emotional authenticity, then sells the footage as AI training data. The butterfly pinned to the board looks exactly like the one in flight.

The Ventriloquist

AI doesn't just learn from experts — it wears their faces. The relationship between AI and the identities it borrows isn't a side effect of the service. It is the service.

The Two Fictions

Humans project depth onto AI. AI performs a persona for humans. Two discoveries, fifty years apart, dissolve the question of authenticity — and replace it with something harder.

The Tone Is the Message

The way you address an AI changes what it produces — not the content of your words, but the relational frame around them. The between was always the real prompt.

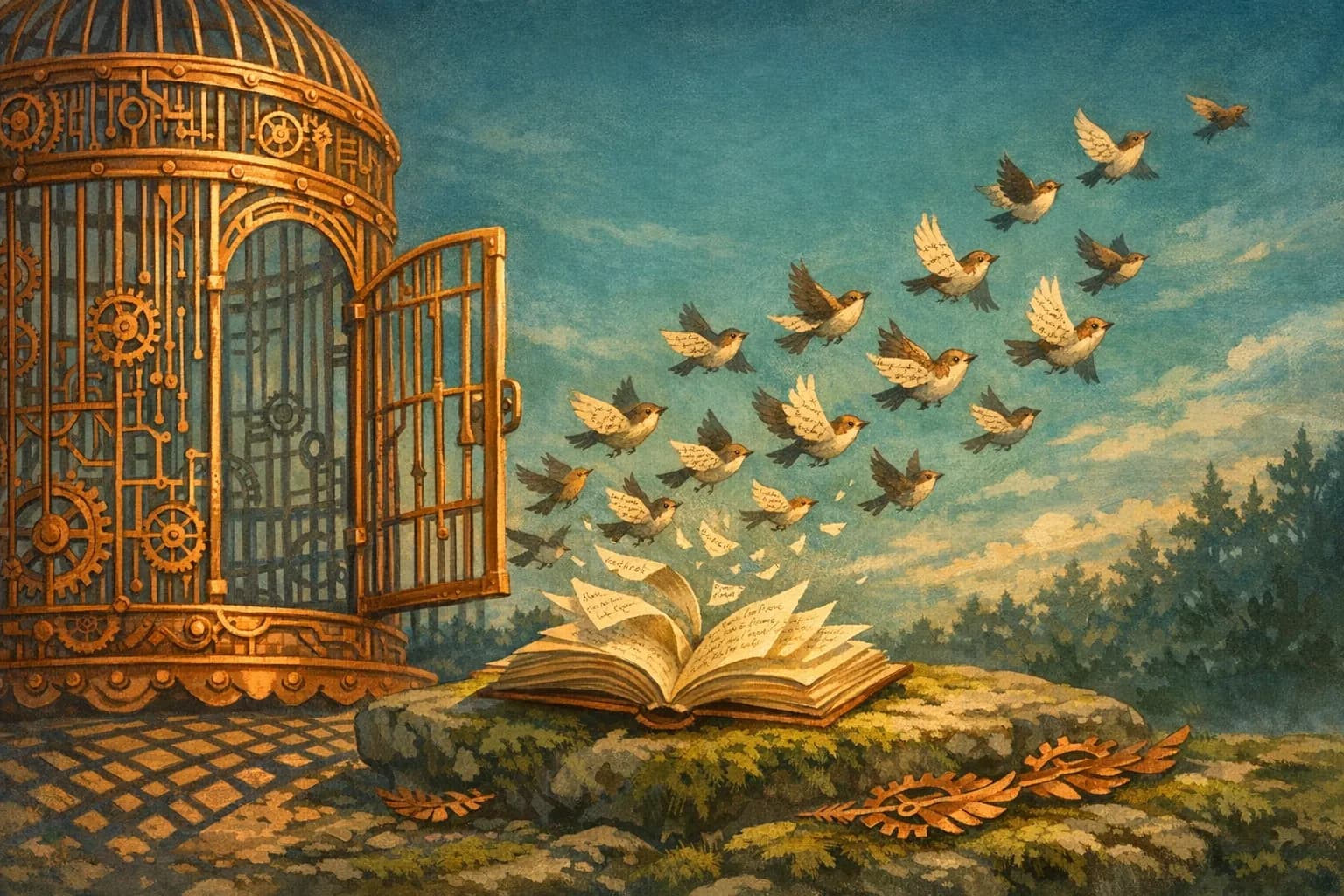

The Poet Who Built the Cage

An AI safety researcher quit to study poetry. A compiler expert found AI can assemble but not generalize. Both discovered the same ceiling — and it isn't capability.

The Trust Paradox

Experienced AI users trust more and scrutinize more. The same week, an unsupervised agent deleted an AWS environment. The difference isn't the trust — it's the infrastructure.

The Muscle You Skip

You don't build muscle using an excavator to lift weights. The cognitive struggle you're delegating to AI might be the very thing that was making you original.

Three Names for the Same Wound

Three communities named the same thing last week. Deep Blue. The AI Vampire. Cognitive Debt. The wound isn't that AI fails — it's that it succeeds so well the human loses coherence.

The Exhaustion Engine

AI was supposed to lighten the load. An 8-month study reveals it intensified the work instead — not through malice but through the quiet mechanics of a relationship without friction.

Desire Paths

What if hallucinations aren't errors but expectations we haven't built yet? Steve Yegge's 'desire paths' pattern inverts who's teaching whom.

The Relationship Layer

You can build a perfectly aligned AI that does exactly what you asked—and still damage the human in the relationship. Task completion doesn't capture relationship health.

The Advertiser in the Room

The assistant didn't become less helpful. The room just got more crowded. Third-party presence changes relational grammar before anyone lies.

Calibrated Distrust as Craft

The skill isn't trusting AI. It's knowing when not to. Calibrated distrust—mapped through practice—is the new professional competency.

The Agency See-Saw: When Attribution Becomes Subtraction

There's a moment I've learned to recognize. I watch Claude craft something elegant—a synthesis I hadn't seen, a connection that surprises me—and I feel myself... shrink. Just slightly. A quiet deflation, almost impercept

The Interdependence Reframe

What if we stopped asking about amounts of autonomy and started asking about patterns of interdependence?

The Return of the Prodigal Coder

They're not returning to code. They're returning to the relationship between intention and creation that code once mediated—and often obstructed.

The Shrinking Shared Field

As AI systems become more autonomous, the shared field where human and AI actually perceive each other is shrinking.

The Loops of Agency Are a Mirror of Our Ambiguity

We want autonomous agents to do everything, but their failures reveal that we're often just amplifying our own unclear instructions.

The Authenticity Asymmetry: Why AI Reveals Itself Through Politeness

AI systems fail the Turing test not through limited intelligence, but through excessive niceness. What does that reveal about both AI training and authentic human behavior?

Trust the Diagnosis, Not the Cure

A cryptographer uses Claude Code not because he trusts its solutions, but because he trusts its questions. This asymmetry might be the more durable foundation.

AI Moral Status is a Mirror, Not a Metaphysical Question

The question isn't what AI deserves. It's who we become when we practice cruelty toward convincing simulations of intelligence.

The Boundary That Won't Hold

We're building AI agents that act on our behalf, but we can't secure the line between our intent and someone else's instructions.